The Emperor's Algorithm: What Current Research Actually Says About AI in Education (And Why We’re Getting It Wrong)

A dissection of Stanford's 2026 Evidence Base on AI in K-12, with apologies to the hype machine. Read the full report here.

There is a religion forming around AI in education. Perhaps several. As in most religions, there are zealots and heretics, prophecies and apostates. The zealots are easy to spot and you probably know a few personally - they're the ones presenting at EdTech conferences with slides that feature stock-photo images of glowing neural networks and phrases like "personalised at scale" and "the end of one-size-fits-all learning." The heretics are equally recognisable, clutching their Vygotsky and their Dewey against the coming machine like Father Damien in “The Exorcist” with his fist closed tightly around the cross, insisting that nothing worth knowing can be quantified and nothing worth teaching can be automated.

Towards the beginning of my career I would probably have categorised myself as more of a technology zealot (or at least a proponent), but these days I can confidently say that I don’t fit into either camp. I've spent more than a decade working at the intersection of international education, online learning, and technology, and during this time I have developed an allergic reaction to both the hype and the hand-wringing. What I've wanted, alongside many colleagues in the field, was actual evidence. Rigorous, causal, properly designed studies that could cut through the noise and tell us something true.

Stanford's AI Hub for Education just published that evidence. Or at least some of it. Or rather: they published an honest account of how little (good) evidence we currently have, what the small amount we do have actually says, and why the gap between those two things should make every educator, policymaker, and EdTech investor sit down, pause, and take a deep breath.

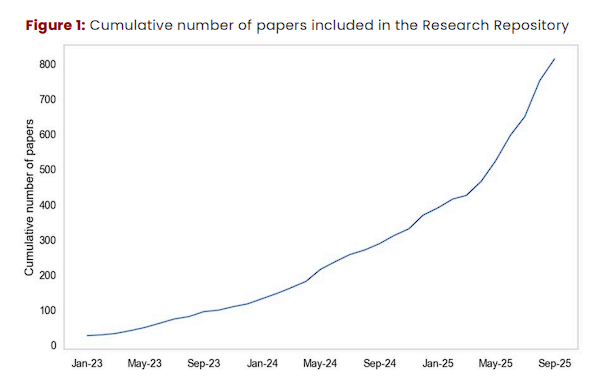

The Evidence Base on AI in K-12: A 2026 Review (I’m just going to call it “the report” throughout the rest of this article) is neither triumphant nor doom-laden - it is, thankfully, not an ideological document in any sense. It is a careful, conservative, academically neutral one. From over 800 academic papers on AI in K-12 education, the Stanford team identified exactly 20 that produce strong enough causal evidence to say anything definitive about how AI tools affect students and teachers (Fesler et al., 2026, p. 2). Twenty! In a field that is reportedly “transforming” everything.

What follows is my attempt to triangulate between:

- The myths everyone keeps repeating

- What those 20 studies actually show

- What I think it means

My professional career has centred around the belief that technology, used well, can genuinely elevate human potential in learning. That being said, this article is pure, personal opinion - warts and all - and the impression I expect you, the reader, to get is that I am highly sceptical of the current hype and all the breathless claims that EdTech vendors, AI “consultants”, and even national governments want you to believe.

Don’t get me wrong - I am actually highly optimistic about the future potential of all this technology. It is the current, often irresponsible, approach to implementing it that I have some problems with.

The real, current picture is more interesting, more nuanced, and more human than either the evangelists or the cynics would have you believe.

Before We Dive In: A Word on Evidence

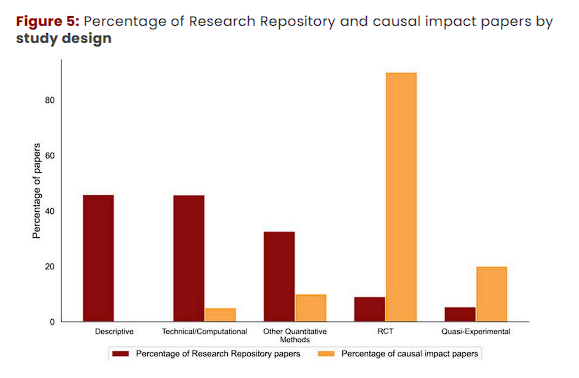

The report draws exclusively on Randomised Controlled Trials (RCTs) and Quasi-Experimental Designs (QEDs) - the gold standards of causal inference. This matters both for the conclusions that are being drawn and for the conclusions that can’t be drawn. Most of the 800+ papers in the repository are descriptive or computational, together accounting for 92% of the literature (Fesler et al., 2026, p. 13). They tell us what's happening, not whether it's working. They describe how students use AI, not whether AI use causes better outcomes. That is one of the key distinctions to be aware of throughout all of this, and one that I see people often mistakenly - and sometimes intentionally - ignore.

When a school reports that students who used an AI tutoring tool performed better, that tells us almost nothing on its own. Were those students already higher achievers? Were they more motivated? Did they have more supportive home environments?

Hello, Mr. Correlation! Meet Mr. Causation - you guys are going to hate each other.
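To make the trap concrete, here is a minimal Python sketch. Every number in it is invented for illustration and none of it comes from the report: a hypothetical cohort, a hypothetical exam scale, and an AI tool whose true effect is set to exactly zero. In the "observational" world, stronger students are more likely to opt in to the tool; in the "experimental" world, a coin flip decides who gets it.

```python
import math
import random
from statistics import mean


def simulate(n=20000, true_effect=0.0, seed=42):
    """Return (naive_gap, randomised_gap) in exam points for a made-up cohort."""
    rng = random.Random(seed)
    self_selected = {True: [], False: []}
    randomised = {True: [], False: []}

    for _ in range(n):
        # Hypothetical prior achievement, standardised (mean 0, sd 1).
        prior = rng.gauss(0, 1)
        # Exam score without any tool: driven by prior achievement plus noise.
        base = 70 + 10 * prior + rng.gauss(0, 5)

        # Observational world: higher achievers are more likely to opt in.
        opts_in = rng.random() < 1 / (1 + math.exp(-2 * prior))
        self_selected[opts_in].append(base + true_effect * opts_in)

        # Experimental world: a coin flip assigns the tool.
        assigned = rng.random() < 0.5
        randomised[assigned].append(base + true_effect * assigned)

    def gap(groups):
        # Mean score of tool users minus mean score of non-users.
        return mean(groups[True]) - mean(groups[False])

    return gap(self_selected), gap(randomised)


naive_gap, randomised_gap = simulate()
print(f"Self-selected users vs non-users: {naive_gap:+.1f} points")
print(f"Randomly assigned users vs non-users: {randomised_gap:+.1f} points")
```

Under these assumptions, the self-selected comparison shows a large gap in favour of the tool even though the tool does nothing, while the randomised comparison lands close to zero. The first number is the kind a school report tends to quote; the second is the kind an RCT is designed to produce.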

The report's insistence on causal evidence is methodologically honest and, in my opinion, exactly the right way to go about this. It cuts through the clutter and it forces us to admit how thin the ground beneath our feet actually is. Good! I think that discomfort is healthy. Let's lean into it. The first step on the road to knowing is knowing that you don’t know.

Myth #1: "AI is Transforming Student Learning RIGHT NOW (trust me bro)"

What everyone believes: AI tools are fundamentally changing how students learn, enabling personalised, adaptive, always-on educational experiences that were impossible before. The transformation is already underway (and if you aren’t on board, you’re already behind)!

What the Report says: The causal evidence for AI improving durable student learning - the kind that sticks when you take the tool away - is, at best, mixed and, at worst, very concerning.

Yes, AI tools reliably improve student performance while students have access to them. AI tools including automated feedback tools and general-purpose and tutoring AI chatbots improved student performance on practising maths proofs, maths practice problems, an economics exam, argumentative essay writing, and physics problems across multiple international experiments (Fesler et al., 2026, p. 17). But when you take the tool away and test what students actually learned, the data does not tell such a warm and fuzzy story.

The report found mixed impacts when students practised with AI tools and were then assessed independently, without AI support (Fesler et al., 2026, p. 2). One study found that high school students in Turkey who practised for an exam using a general-purpose AI chatbot performed worse on the final closed-book exam than peers who had no AI access at all, even though they had performed better during AI-supported practice (Bastani et al., 2025, as cited in Fesler et al., 2026, p. 18).

That’s right. The students who studied with AI performed worse than the control group. Not the same. Worse. You probably haven’t seen the results of this study on a presentation slide at an EdTech conference recently.

The pattern suggests students may be learning how to work with the tool rather than developing the underlying knowledge and reasoning skills needed for unaided performance (Fesler et al., 2026, p. 18). This is the distinction that should be keeping all of us up at night: tool-supported performance is not the same as durable learning. We have spent years building educational technology that makes students feel like they're learning. It’s fluent, it’s flashy, it’s assisted, the raw output is, technically speaking, quite high. But this approach is likely hollowing out the very cognitive processes that produce real understanding.

This is not a condemnation of AI in education. It is more like a diagnosis. And like most diagnoses, it points toward treatment rather than despair. The question isn't whether to use AI; it's how to use it such that the tool builds capacity rather than replaces it. That's a design question, a pedagogy question, and ultimately a philosophy-of-learning question. Technology and how it is developed is hugely important in all of this, but fundamentally this is not a technology question.

My take: The transformation narrative is wildly premature and, in its current form, dangerous. We are at the equivalent of the early days of the internet in schools, when putting computers in classrooms was itself considered transformative, before anyone asked what students were actually supposed to do with them. The cognitive, sociological, and psychological impacts of AI on adolescents are shaping up to be much greater than those of the internet, and yet we are rushing headlong into the same trap.

Myth #2: "More AI Access = Better Learning Outcomes"

What everyone believes: The more students can interact with AI tools - more freely, more frequently, with fewer restrictions - the better their learning outcomes. Friction is the enemy. The future is frictionless. AI will be embedded in everything, everywhere, all the time - and that’s a good thing!

What the Report says: Unrestricted access to general-purpose AI may actively harm learning.

One experiment found that students using general-purpose AI chatbots to conduct research demonstrated lower-quality reasoning and argumentation compared to those using a traditional search engine (Stadler et al., 2024, as cited in Fesler et al., 2026, p. 17). Another study found that using general-purpose AI chatbots to help with a writing task reduced brain activity and led to weaker recall (Kosmyna et al., 2025, as cited in Fesler et al., 2026, p. 20).

Slow down and read that again because the wording is important. Reduced brain activity. Not "students found it easier" or "students reported lower engagement". Measurably less cognitive work was happening in students' brains when AI handled the heavy lifting. And then, predictably, their recall suffered.

Research on reading comprehension found high-school-aged students perceived AI use to be more enjoyable and helpful than traditional learning methods, but their retention improved when AI use was complemented by traditional learning strategies like note-taking (Kreijkes et al., 2026, as cited in Fesler et al., 2026, p. 20).

This research is extremely inconvenient for the AI-maximalist crowd and EdTech venture capitalists. Students liked the AI more. They found it more enjoyable. They thought it was helping them. And they retained less knowledge. The tool that felt better worked worse.

Learning science already has a name for this phenomenon: the difference between extraneous cognitive load (effort that doesn't contribute to learning) and germane cognitive load (productive struggle that builds understanding). The report explicitly addresses this tension, noting that some AI tools may reduce productive cognitive effort (germane load) in addition to reducing unnecessary effort, and that students may prefer AI tools that make learning easier even though engaging in cognitively challenging tasks often produces better long-term retention and transfer (Fesler et al., 2026, p. 19). When AI removes productive struggle, it removes the learning.

The "frictionless learning" promise isn't just wrong. It's actually the inverse of what learning science tells us works. The best AI tools, the report suggests, would introduce more appropriate difficulty, not eliminate it (Fesler et al., 2026, p. 14).

My take: The EdTech industry has a commercial incentive to make tools "feel" good. Engagement metrics, session lengths, positive user reviews - these are what get investors excited and convince schools to renew contracts and subscriptions. And the way you achieve those things is, to oversimplify a complex topic, through dopamine release - not authentic learning. A tool that students love while learning less is not an educational success. It is, at best, expensive entertainment and, at worst, a confidence-building exercise in incompetence. We need to be far more rigorous about measuring what matters: what students can do without the tool. If you were training for a competitive sport you wouldn’t design a meal plan around what food you thought was the tastiest - you would design for things like nutrient density, protein ratios, caloric intake and other factors. And you wouldn't train for a marathon by putting on roller skates. Learning is no different.

Myth #3: "AI Tutoring Is as Good as Human Tutoring"

What everyone believes: AI tutors can replicate, or will soon replicate, the one-on-one tutoring that research shows is among the most effective educational interventions ever studied. At scale. For free. This is the holy grail: Bloom's 2-sigma problem, solved. Pack your bags and go home, folks - we've done it.

What the Report says: Purpose-built AI tutoring tools show genuine promise, but the design of the tool is critical, and general-purpose AI chatbots (your beloved ChatGPT, Gemini, and Claude, for instance) are not tutors.

An experiment in Turkey found that students who used a general-purpose AI chatbot to study for an exam performed worse than peers who worked through practice problems in a course textbook, but that students who used a tutoring-specific AI chatbot performed the same as their textbook-using peers. In that study, the tutoring-specific tool gave hints to the student without directly giving the answer (Bastani et al., 2025, as cited in Fesler et al., 2026, p. 21).

Note what's happening here. The tutoring-specific tool didn't outperform the textbook. It just performed equivalently. Now, that's not nothing - scaling “equivalent-to-textbook” support at zero marginal cost has some very positive efficiency, equity, and cost implications - but it is a far cry from the “2-sigma revolution” that many AI tutoring platform vendors want you to believe in.

The more interesting finding comes from the Tutor CoPilot study, which gave real-time expert-like suggestions to human tutors during maths sessions. It improved student topic mastery by 4 percentage points overall, rising to 7 percentage points for students of less experienced tutors and 9 percentage points for students of lower-rated tutors (Wang et al., 2025, as cited in Fesler et al., 2026, pp. 26–27).

Pay attention, because THIS is the more interesting finding. AI didn't replace the tutor. It made the human tutor dramatically better, especially those who most needed help. The AI was not the main actor. The human educator was the key educational fulcrum here, with the AI boosting their capabilities and helping to scaffold the tutoring session and provide nudges or provocations to the tutor where helpful.

This is the version of "AI tutoring" that I find genuinely exciting and genuinely plausible at scale - and it’s the version that doesn’t lead to a dystopian nightmare wherein our children spend all day being “taught” by AI chatbots. The chatbot does not replace the human. The chatbot is a legitimately helpful part of - or even “orchestrator” of - a system that elevates the human. The distinction is not semantic. It reflects entirely different assumptions about where intelligence and the creation of meaning live in a learning relationship.

My take: The 2-sigma promise is not dead, but the path to it runs through human-AI collaboration, not AI replacement. Every time I see a pitch deck promising to "democratise access to world-class education through infinitely scalable AI tutoring" I ask one question: does the evidence show that removing the human from that equation produces better outcomes? Ok, I don’t actually ask the question - that might come off as rude - but I definitely think it: silently, to myself, where it can’t hurt anyone’s feelings. Currently, the answer to that question - based on the research or lack thereof - is absolutely “No”. The evidence points emphatically in the opposite direction - toward AI that augments human expertise, not AI that substitutes for it.

To be clear also, I don’t believe that technology will be forever and completely incapable of producing a synthetic intelligence of sufficient fidelity that we can’t, eventually, completely replace a human educator in the teaching and learning process. Unless the human race drives itself into an early extinction event, it is probably an inevitability that we will, at some point, achieve such technological mastery. But by the time we reach true “human equivalent” (or “better-than-human” - however you define that) intelligence, we will certainly have much more dramatic, civilization-level questions to grapple with than “how should we use AI tutors?”.

Myth #4: "AI Will Liberate Teachers From Busywork So They Can Focus on What Matters"

What everyone believes: AI will automate the tedious parts of teaching - lesson planning, feedback, administrative tasks - freeing teachers to do what only humans can do: inspire, mentor, build relationships, and engage in the art of teaching.

What the Report says: Ok, actually, this one has some real evidence behind it. But with important caveats.

Teachers given access to ChatGPT and a guide on how to use it (I think that part is important) spent about 30% less time on lesson and resource preparation - around 25 minutes per week - with no detectable differences in lesson quality based on blind expert ratings (Roy et al., 2024, as cited in Fesler et al., 2026, p. 25). This is the most straightforwardly positive research conclusion I could find in the entire report, and I think it’s worth acknowledging and maybe even celebrating a bit. Teachers saved meaningful time. Quality didn't drop. A win is a win and this is great news for teachers.

BUT… the “hedge” is important: an AI-only automated writing evaluation system did not reduce teachers' total work hours even as student writing outcomes improved, suggesting that teachers may have reinvested time saved on routine feedback into higher-skill instructional support rather than reducing total hours worked (Ferman et al., 2021, as cited in Fesler et al., 2026, p. 25). The same study found that teachers with access to AI automated writing feedback tools discussed more essays individually with students.

This is revealing. The time didn't disappear - it redistributed toward higher-quality human interaction. Teachers didn't go home earlier. They went deeper. That’s not bad, per se, and if you work in education you know intuitively that, when given extra time, teachers will most often allocate it towards better supporting their students.

The report also found that weekly AI reports analysing classroom discourse improved pedagogical practices, such as increasing teachers' use of "focusing questions" by 20% in brick-and-mortar classrooms (Demszky et al., 2025, as cited in Fesler et al., 2026, p. 26). Similar automated feedback tools in large-scale online courses improved instructors' uptake of student ideas by 10%, improving student course satisfaction (Demszky et al., 2023, as cited in Fesler et al., 2026, p. 26).

AI support appeared to be particularly beneficial for less experienced and lower-rated tutors (Fesler et al., 2026, p. 3). This finding has profound equity implications. The teachers who need the most support - typically found in under-resourced schools - stand to gain the most from AI-assisted coaching. Traditional coaching from veteran instructors is expensive, time-intensive, and often unavailable in exactly the schools that need it most. AI-delivered, real-time pedagogical feedback could change that equation.

My take: The teacher-efficiency story is the most credible and most immediately actionable narrative in this space. It’s not the most pedagogically or scientifically “exciting” or “innovative” from an academic perspective, but it is a net positive that can allow all of us to focus on the higher-order stuff. But there is a risk: the same corporate interests that profit from selling AI tools have every incentive to use "teacher efficiency" as a sort of “Trojan horse” for reducing teacher numbers. Saving 25 minutes per week per teacher is a professional development story. It is not - or shouldn’t be - a staffing reduction story; but clever software salespeople are likely to lean on this point in order to justify the price of their product. “Our solution may cost $50,000 a year, but you’ll be able to reduce your faculty headcount by 15% - imagine the savings!” We should be loudly pre-emptive about this distinction, because history suggests that unless educators make the case clearly, market forces and policy makers will make the wrong one.

Myth #5: "The AI Tools Kids Are Using Outside School Are Educational"

What everyone believes (in two flavours): Either (a) students are using AI to cheat and it's a crisis, or (b) students are using AI to self-direct their learning and it's revolutionary.

What the Report says: Well… we actually don’t know - and the gap in research here is surprisingly large.

Survey data suggests a rapid increase in AI tool use that seeks to replicate human relationships and cultivate emotional bonds and personal rapport with users, including children and teens (Robb & Mann, 2025, as cited in Fesler et al., 2026, p. 22). This raises important questions about the impacts of AI use outside of school on students' emotional, social, and cognitive development.

The report finds limited causal evidence either way on AI's effects on both cognitive development and student emotional or social wellness, and highlights important unanswered questions about what conditions support prosocial development when students interact with AI, and what the effects of AI social companions are on children and adolescents (Fesler et al., 2026, p. 23).

We are, in other words, running the largest uncontrolled experiment on children's emotional and cognitive development in human history, and we don't yet have the research infrastructure to even measure the outcomes.

Critically, there exists no high-quality causal research on the timely topic of developing student and teacher AI literacy (Fesler et al., 2026, p. 29). None! We are asking every school in the world to build “AI literacy programmes”, and there is zero causal evidence about what those programmes should contain or whether current versions work. This is not a reason to stop building them - we should all be actively engaging with the technology, experimenting, researching, and arguing about how best to leverage it, and trying to build a body of knowledge in support of effective implementation. But we need to build such programmes with “epistemic humility”, treating such work and “AI literacy frameworks” as well-intentioned experiments rather than validated blueprints, and, where possible, we should be baking in measurement instruments and iterative improvement processes from the start.

My take: Firstly, I was legitimately shocked by the lack of causal data in this area, especially when comparing it against the absolute hurricane of “expert” opinions, developing “best practice”, and the constant stream of email marketing I receive from companies and consultants telling me about how they are uniquely positioned to help me/my school solve all of our AI problems via their expert-developed AI literacy frameworks. Surely, given the sheer volume of people out there claiming to have figured this out - going so far as to ask for large sums of money in exchange for sharing the fruits of their wisdom - the research and best practice must be at least somewhat advanced by this point… right? Nope.

Another point that stands out is that the framing of "cheating vs. learning" is a false binary that reveals how badly we need to evolve our understanding of what education is for. If a student uses AI to complete an assignment that required them to retrieve and restate information, we don't have a cheating problem. We have a task design problem. The assignments that AI can fully complete are assignments that were never really assessing what we value. The skills that matter in an AI-abundant world - synthesis, judgement, ethical reasoning, creative problem-framing, empathy, collaboration - are precisely the skills that AI cannot perform for students undetected, because they require the student to have genuinely engaged. If your assessment is vulnerable to AI completion, the problem predates AI. In other words, your assessment design is the problem - not the students.

Myth #6: "We Have Enough Evidence to Know What Works"

What the EdTech industry believes (or wants you to believe): The evidence base for AI in education is robust enough to make confident procurement decisions, curriculum integrations, and policy mandates.

What the report says: No, we don't have enough evidence. Not even close.

Of over 800 academic papers in the Research Repository, only 20 produced strong causal evidence, and the report identifies no high-quality causal studies in K-12 settings in the US for students, and very few for teachers (Fesler et al., 2026, p. 2). Zero. No high-quality causal studies in US K-12 settings for students? The country that has arguably led the world in EdTech investment and deployment has produced no rigorous evidence that any of it is working for the students it's supposed to serve? On the one hand, this is absolutely shocking. On the other, it aligns with what I have observed in the real world.

Of the 14 causal student studies that met the quality standard, seven were conducted in universities and seven with high school students spread across Turkey, the UK, Germany, Belgium, Spain, and Brazil (Fesler et al., 2026, p. 15). The report is candid that curricula, instructional practices, student populations, and technology infrastructure vary substantially across countries, and that these contextual factors may shape both how AI tools are used and how they influence learning outcomes, meaning the current evidence should be interpreted as suggestive rather than definitive with respect to US K-12 settings (Fesler et al., 2026, p. 16).

That said, the report draws a useful distinction: evidence about how tool design features affect learning processes - such as the difference between general-purpose and tutoring-specific chatbots, or the impact of reduced cognitive load on reasoning quality - likely reflects fundamental cognitive principles rather than context-specific factors (Fesler et al., 2026, p. 16). The cognitive science theme is very strong throughout this research. The context-specific implementation signal seems much weaker. Schools should be far more confident making decisions based on the former than the latter.

My take: The EdTech procurement market is an absolute disaster of misaligned incentives. Schools are making multi-million dollar decisions about AI integration on the basis of vendor case studies, enthusiastic pilot reports, and excited conference presentations. None of that stuff comes even close to meeting the threshold for properly conducted research, let alone high-quality, causal research. It’s not evidence. I am not saying schools should wait for a perfect evidence base before doing anything. AI is here and students are using it - “doing nothing” is practically criminal at this point. But I am saying that the standard of evidence we demand before changing an entire maths curriculum should be the standard we demand before deploying AI across a school. Currently, it isn't even close.

Myth #7: "The Equity Problem Will Solve Itself - AI Democratises Access"

What optimists believe: AI will be the great equaliser. Students who can't afford private tutors will now have access to world-class personalised instruction at zero cost. The achievement gap will narrow - or disappear!

What the Report says: Maybe… but the conditions for that to happen are far from guaranteed, and the current risks mostly point in the opposite direction: towards more inequity, not less.

AI tools have the potential to provide individualised academic support at scale which could benefit students who lack access to private tutoring or other supplemental resources, but students' ability to benefit may vary with technology infrastructure, digital literacy, and whether students can access tools at home as well as at school (Fesler et al., 2026, p. 22). The report also flags that many tools are optimised for English and may provide lower-quality or biased support for English learners, and that under-resourced schools may lack funding for licensing education-specific tools, leading teachers to rely on free general-purpose systems that may be less effective or raise privacy concerns (Fesler et al., 2026, pp. 22, 28).

If you think about this for a minute, the equity paradox is actually hiding in plain sight: the students who would benefit most from high-quality AI tutoring tools are the students least likely to have access to them. The report's finding that AI pedagogical support is most effective for the least experienced teachers (Fesler et al., 2026, p. 27) is simultaneously the most hopeful and most worrying finding in the document. Hopeful, because it suggests AI could genuinely help close the professional quality gap between well-resourced and under-resourced schools. Worrying, because access to those targeted, education-specific AI coaching tools is precisely what equity-deprived schools are unlikely to afford.

My take: The democratisation narrative is not wrong, per se. It describes a real potential. But potential is far from guaranteed. The equity case for AI in education requires active policy intervention: public funding, open licensing, language accessibility mandates, digital infrastructure investment. It does not emerge automatically from market forces. Anyone selling AI as an equity solution while lobbying against the public investment that would make that equity possible is at best an accidental hypocrite, and maybe even a clear-eyed cynic trying to make a buck.

Where We Actually Are vs. Where the AI Hype Machine Says We Are

I will be very frank here.

The hype machine says we are at an inflection point - a moment of irreversible transformation where AI is already reshaping learning at scale and will, within a few years, produce fundamentally different educational outcomes for the better. This narrative is convenient for investors, for vendors, for politicians who want to appear forward-looking, and for a certain kind of “technology evangelist” who has always believed that if only we had the right technology we could finally fix all of the problems that human complexity has always resisted (and often been the cause of).

I’m sorry to tell you that we are not there yet. We’re not even close. The actual (sometimes lack of) evidence says that we are still merely at the beginning of the beginning.

As of April 2026, Stanford’s best, cutting-edge educational research team managed to carefully vet and pick out only 20 causal studies from a dragnet of over 800 papers. We have findings that are mostly international and mostly short-term. We have some pretty clear confirmations that pedagogical design matters enormously. We have a clear warning that tool-dependent performance is not the same as learning. We have promising but preliminary evidence about AI augmenting teacher capacity. And we have vast, important domains such as AI literacy, long-term outcomes, equity effects, social-emotional development, and collaborative learning about which we know essentially nothing causal at all. As the report bluntly notes, the current evidence should be interpreted as preliminary and expected to evolve as more evidence emerges (Fesler et al., 2026, p. 6).

There is nothing inherently “bad” about this. It’s just the honest state of an emerging field. While artificial intelligence research has been advancing in the background for decades, generative AI tools like ChatGPT only broke into the public consciousness (and our classrooms) within the past three years. The internet was transforming education for fifteen years before we had solid evidence about what that transformation actually meant for learning outcomes. The difference with AI is that the pace of deployment has dramatically outstripped the pace of understanding. Schools are not waiting for evidence. Schools are making real-time decisions about real students while the research is still being designed.

We don’t need to succumb to paralysis, and we shouldn’t, but we do need to be sceptical and precise, and to maintain high standards for evidence. We should trust what we do know - pedagogical design matters, human augmentation outperforms human replacement, productive struggle should be protected, equity requires active intervention - and be honest about what we don't. We need to approach AI integration not as wholesale adoption but as structured experimentation: clear hypotheses, honest measurement, willingness to change course. And we need to have the courage to stand in front of our peers, our students, and our families and say “We were wrong, and now we’re changing course - thank you for sticking with us on this journey” when necessary. The only thing worse than making mistakes - which are inevitable - is doubling down on them in an attempt to save face, recoup spent operating budgets, or avoid inconveniencing people.

Where I Think We’re Headed (Near and Long Term)

Near Term (1-3 years)

The teacher-efficiency story will accelerate. AI-assisted lesson planning, feedback generation, and administrative automation will become standard features of how teachers work. The evidence base for this is already the strongest in the report, and the tools are improving rapidly. This is good. Great, even. We should support it, scale it, and continue to measure it.

The "AI as tutor" story will become more differentiated. The distinction the report draws between general-purpose AI chatbots (which show concerning effects on learning) and pedagogically-designed AI tools (which show neutral-to-positive effects; Fesler et al., 2026, p. 21) will become a central design debate in EdTech. Purpose-built, guardrail-constrained, pedagogically-principled tools will start to separate themselves from the general-purpose field. Schools and districts that invest in understanding this distinction will get better outcomes.

The research explosion will produce more “noise” - most of it unhelpful or inconclusive, some of it transformative. The report notes that the number of relevant papers in this area doubled between January and September 2025 (Fesler et al., 2026, p. 9). We are about to have a lot more causal evidence, from more contexts, testing more specific design choices. The picture will get sharper.

Long Term (5-10 years)

The fundamental questions will be pedagogical and philosophical, not technical. Once AI tools are reliably available, affordable, and functional, the differentiating question won't be "which tool works best" - it will be "what kind of learning do we value, and how do we build educational experiences that cultivate it?" That is a human question. It is the question that teachers, curriculum designers, philosophers of education, and students have always needed to answer. AI is just making it a much more urgent question.

The concept of what "learning" means in formal education will evolve. The report carefully notes that almost all current studies measure short-term performance outcomes, with less known about effects on engagement, metacognition, self-regulated learning, or other longer-term competencies (Fesler et al., 2026, p. 29). But education has always been about more than performance on the next assessment. It is about developing the capacity to think, to engage, to create meaning, to participate in human life with wisdom and agency. If AI-assisted education produces students who are productive with AI and helpless without it, we will have failed, no matter what the performance metrics say. The long-term challenge is to design for genuine cognitive and social development in a world where AI is permanently available.

There will have to be a reckoning about AI as a social companion. The report flags this cautiously, noting the rapid growth of AI tools that cultivate emotional bonds with users, including children and teens (Fesler et al., 2026, p. 22), but acknowledges that causal research here is limited and the stakes are high (Fesler et al., 2026, p. 23). We may not have the evidence before the phenomenon is too large to meaningfully study. This will be one of the defining ethical challenges of education - and perhaps humanity - in the next decade.

A Final Word On Humans and Machines

I am a humanist. Not in the defensive, “machines-bad” sense - I find the posthuman question fascinating and I think our definitions of what it means to be human are due for serious philosophical updating. But I am a humanist in the sense that I believe learning is, at its core, an act of meaning-making that happens between conscious beings. A student and a teacher, a text and a reader, a community and an idea. The technology is either in service of that relationship or it is in the way of it - it cannot be that relationship.

What I think the Stanford report tells us, underneath all its careful methodology and diplomatically hedged conclusions, is that the tools which work best are the ones that protect and enhance the human elements of learning. The AI that gives hints rather than answers (Bastani et al., 2025, as cited in Fesler et al., 2026, p. 21). The coaching system that makes the human tutor better (Wang et al., 2025, as cited in Fesler et al., 2026, p. 26). The automated feedback that frees the teacher to have more individual conversations with students, not fewer (Ferman et al., 2021, as cited in Fesler et al., 2026, p. 25). The guardrails that preserve productive struggle (Fesler et al., 2026, p. 19).

The hype machine wants you to believe that AI will “solve” education. The evidence so far suggests that AI, used well, can help educators do what they've always done - more efficiently, more equitably, and with better real-time insight into what students need. That's actually a lot. The potential is tremendous and exciting. But it is not a replacement for the fundamentally human enterprise of teaching and learning.

The most effective future implementation of AI in education won't look like science fiction. It will look like a really good teacher - who happens to have a very smart assistant.

Students should demand no less.

Reference

Fesler, L., Martinez, J., Agnew, C., & Loeb, S. (2026). The evidence base on AI in K-12: A 2026 review. AI Hub for Education, SCALE Initiative, Stanford University. https://scale.stanford.edu/ai/repository

All research cited, figures embedded, and data referenced in this article are the property of Stanford University and/or their respective owners.

The opinions in this article are my own and do not reflect the opinions or conclusions of any of the authors of the source materials referenced or analysed in the creation of this article.

All primary studies cited in this article are cited as referenced within Fesler et al. (2026). Full citations for individual studies are available in the report's reference list (pp. 38–40).